Wan Chai, HK, April 03, 2026 (GLOBE NEWSWIRE) -- As AI agents get better at handling digital tasks, a new bottleneck is coming into focus: moving them from the screen into functional, real-world execution.

That is the pitch behind DeepMirror, a startup positioning itself as a runtime layer for physical AI. The company says it has integrated OpenClaw with a Unitree robot, marking an early step toward turning general-purpose agents into systems that can perceive, move, act, and recover in real-world environments.

Bridging the "Reality Gap"

The claim here is bigger than a single robot demo. DeepMirror is arguing that the next important control point in robotics may not be the model itself, or even the hardware, but the runtime that connects the two.

In its view, agents like OpenClaw are becoming increasingly capable of understanding goals, planning tasks, and invoking tools. But that reasoning does not automatically translate into physical competence in a home, office, or other everyday setting. A robot in the real world has to handle a very different class of problems. It needs to know where it is, what it sees, whether a task actually completed, what changed in the environment, and what to do when something goes wrong. It has to deal with moving people, blocked paths, failed grasps, and incomplete actions.

Unlike software, physical execution does not come with an easy undo button. That is the layer DeepMirror says it wants to own.

From Digital Workflow to Physical Execution

OpenClaw helped popularize a different interface for agents by moving them out of the terminal and into a more familiar conversational workflow. Instead of behaving like a developer tool, the system can be tasked in natural language, run for long periods, keep context, monitor jobs continuously, and push results back proactively.

But that type of agent architecture still mostly lives in the digital world. DeepMirror’s bet is that, for physical AI to become useful at scale, agents need a runtime that can translate high-level intent into closed-loop physical execution.

Rather than requiring the upper layer to manage locomotion, perception, motion planning, or hardware-specific control logic, the company wants the agent to issue a goal and let the runtime handle the rest. In practical terms, that means an upper-layer agent should be able to say something like “go check whether the stove is off,” or “bring me the item on the table,” without needing to understand SLAM, sensor fusion, odometry, or action sequencing at the hardware level.

The Four Abstractions of Execution

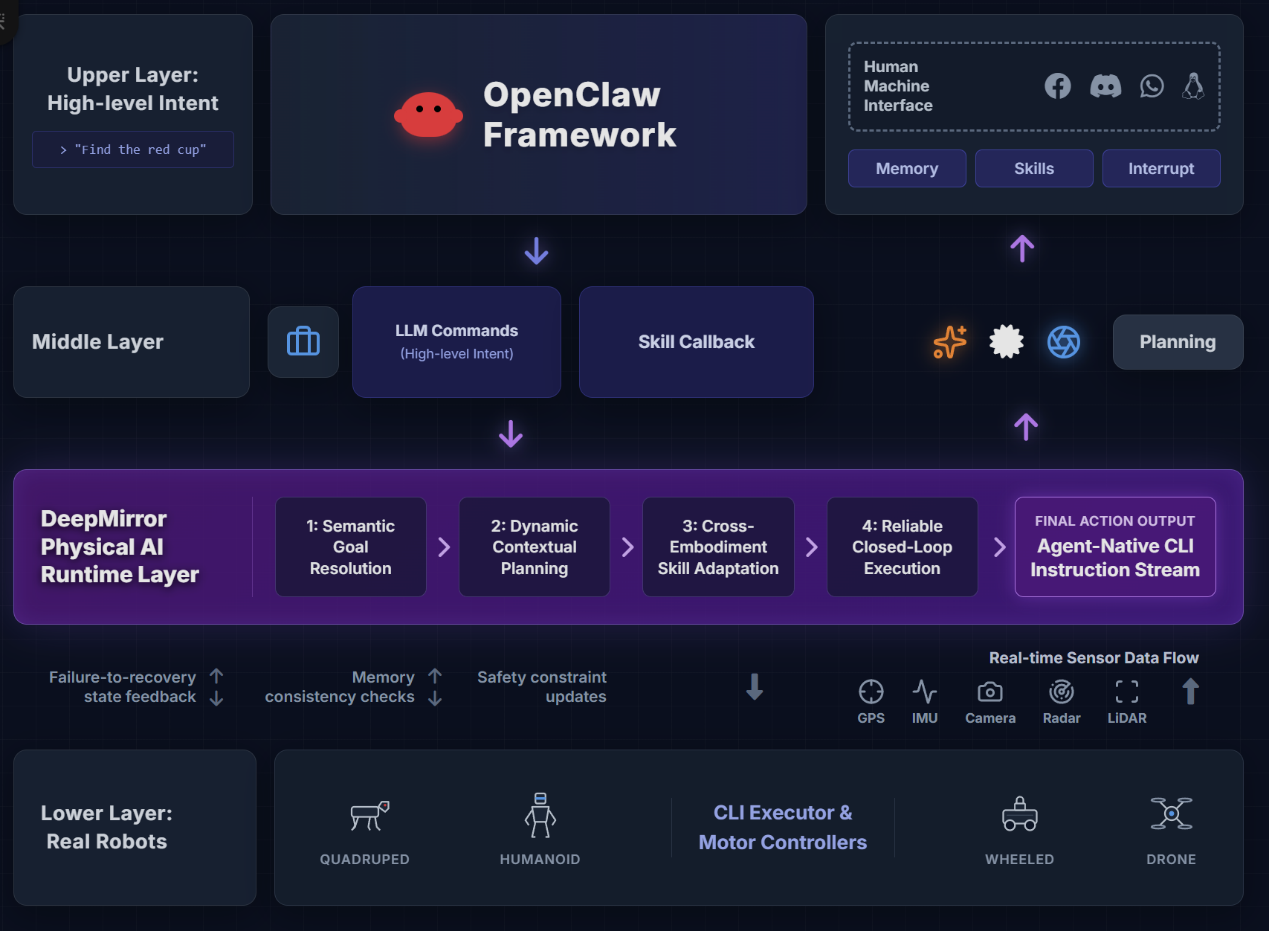

DeepMirror describes its architecture as a stack beneath the agent runtime. At the top sits OpenClaw, which handles intent, planning, orchestration, and tool use. Underneath is DeepMirror’s physical runtime, which handles execution in the real world. The company groups that execution layer into four abstractions:

- Semantic Understanding: Translating natural language intent into actionable machine goals.

- Spatial Mobility: Navigating dynamic environments with moving obstacles.

- Dynamic Action Generation: Handling object manipulation in real time.

- Cross-Embodiment Support: Allowing the same agent logic to run across different robot hardware, from quadrupeds to humanoids.

In other words, the company wants the same upper-layer agent logic to run across multiple kinds of robots without forcing developers to rebuild the entire system for each hardware platform. If that works, it would make the runtime layer strategically important.
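The announcement does not include interface details, so the following is only an illustrative sketch of what cross-embodiment support could look like in code. The names `Embodiment`, `Quadruped`, `Humanoid`, and `patrol` are hypothetical and are not taken from DeepMirror or OpenClaw. The point is that the agent-side function is written once against an abstract interface, and each robot body supplies its own implementation.

```python
from abc import ABC, abstractmethod


class Embodiment(ABC):
    """Hypothetical interface the agent logic is written against."""

    @abstractmethod
    def move_to(self, x: float, y: float) -> str:
        """Move to a position and return a description of the action taken."""


class Quadruped(Embodiment):
    def move_to(self, x: float, y: float) -> str:
        # A real implementation would call the quadruped's own gait controller.
        return f"trot to ({x:.1f}, {y:.1f})"


class Humanoid(Embodiment):
    def move_to(self, x: float, y: float) -> str:
        # A real implementation would call the humanoid's walking controller.
        return f"walk to ({x:.1f}, {y:.1f})"


def patrol(robot: Embodiment, waypoints: list[tuple[float, float]]) -> list[str]:
    """Agent-side logic: identical for every robot body."""
    return [robot.move_to(x, y) for x, y in waypoints]


route = [(0.0, 1.0), (2.5, 1.0)]
quadruped_actions = patrol(Quadruped(), route)
humanoid_actions = patrol(Humanoid(), route)
print(quadruped_actions)
print(humanoid_actions)
```

Only the classes below the interface change per platform; `patrol` never needs to know which body it is driving.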

Reliability and Memory

A lot of robotics software today is still tightly coupled to a specific machine, specific sensors, or a narrow task flow. DeepMirror is trying to make that layer more general-purpose. The company says its runtime is designed to make physical execution observable, interruptible, and recoverable, while preserving state and safety constraints during task completion.

It is also emphasizing memory. According to the company, its system combines a live cognitive layer, spatial memory, and temporal memory. The idea is to give the agent more than a one-shot perception pipeline. Instead of just recognizing an object in a single frame, the system keeps track of where objects are, what happened earlier in the task, why a prior action failed, and how the current environment relates to prior attempts.
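As a rough illustration of the difference between one-shot perception and the kind of memory described above, the sketch below keeps a last-known position per object (spatial memory) and an ordered log of actions with outcomes and failure reasons (temporal memory). The `TaskMemory` and `Event` classes and their method names are assumptions made for this example, not DeepMirror's design.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Event:
    action: str
    succeeded: bool
    reason: str = ""


@dataclass
class TaskMemory:
    """Hypothetical store combining spatial and temporal memory."""

    object_positions: dict = field(default_factory=dict)  # spatial: name -> (x, y)
    events: list = field(default_factory=list)            # temporal: ordered log

    def observe(self, name: str, position: tuple) -> None:
        # Overwrite with the most recent sighting of the object.
        self.object_positions[name] = position

    def record(self, action: str, succeeded: bool, reason: str = "") -> None:
        self.events.append(Event(action, succeeded, reason))

    def last_failure(self, action: str) -> Optional[Event]:
        # Walk the log backwards to find the most recent failed attempt.
        for event in reversed(self.events):
            if event.action == action and not event.succeeded:
                return event
        return None


memory = TaskMemory()
memory.observe("cup", (1.2, 0.4))
memory.record("grasp cup", False, "cup moved before gripper closed")
memory.observe("cup", (1.5, 0.4))  # the environment changed; update position
failure = memory.last_failure("grasp cup")
print(memory.object_positions["cup"], failure.reason)
```

With both kinds of memory available, a retry can use the updated position and the recorded failure reason rather than repeating the original attempt blindly.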

That matters because a lot of what breaks robotics systems is not the first action. It is everything that happens after the environment changes.

An Agent-Native Control Protocol

At the control level, DeepMirror says it has built what it calls an "Agent-Native Robot Control Protocol." The company frames it as a goal-driven execution system rather than a direct command system. Instead of sending raw motor instructions from the top, the agent sends intent, constraints, and context, and the runtime resolves that into skills, modules, and hardware actions while maintaining feedback loops and rollback paths.
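The protocol itself is not specified in the announcement. The sketch below is a minimal, hypothetical reading of the idea: the agent submits a `Goal` carrying intent, constraints, and context, and a runtime function executes a sequence of steps, checks feedback after each one, and rolls back completed steps if one fails. All names here (`Goal`, `Step`, `execute`, and the example step names) are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Goal:
    intent: str
    constraints: dict = field(default_factory=dict)
    context: dict = field(default_factory=dict)


@dataclass
class Step:
    name: str
    run: Callable[[], bool]   # feedback: True if the step succeeded
    undo: Callable[[], None]  # best-effort rollback for this step


def execute(goal: Goal, steps: list[Step]) -> dict:
    """Run steps in order; on the first failure, undo completed steps in reverse."""
    done: list[Step] = []
    for step in steps:
        if step.run():
            done.append(step)
            continue
        for prev in reversed(done):
            prev.undo()
        return {
            "intent": goal.intent,
            "status": "rolled_back",
            "failed_step": step.name,
            "completed": [s.name for s in done],
        }
    return {
        "intent": goal.intent,
        "status": "succeeded",
        "failed_step": None,
        "completed": [s.name for s in done],
    }


log: list[str] = []
steps = [
    Step("navigate_to_kitchen", lambda: True, lambda: log.append("undo navigate_to_kitchen")),
    Step("inspect_stove", lambda: False, lambda: log.append("undo inspect_stove")),
]
goal = Goal(intent="check whether the stove is off", constraints={"max_speed_mps": 0.5})
report = execute(goal, steps)
print(report["status"], report["failed_step"], log)
```

In this example the agent never issues motor commands. It only sees a structured report saying which step failed and that the earlier navigation was undone, which is the kind of feedback a goal-driven interface would need to return.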

The Strategic Middle Layer

That framing is increasingly relevant as more AI companies start to look beyond browser automation and coding assistants toward robots, devices, and other real-world systems.

The broader market question is whether the winning layer in physical AI will be the foundation model, the robot maker, or the execution stack in between. DeepMirror is clearly betting on the third option. The company’s Unitree integration is still early, but it points to a larger ambition: becoming the runtime that allows general-purpose agents to operate reliably in the physical world, regardless of what robot body sits underneath.

If AI agents are going to move from helpful software to useful physical operators, that middle layer could end up mattering a lot.

Contact: YAN QINRUI, qinrui.yan@looper-robotics.com